Research Symposium

26th annual Undergraduate Research Symposium, April 1, 2026

Julia Lobodzinski

Poster Session 2: 10:45 am - 11:45 am / Poster #111

BIO

Julia is an Economics student from Pembroke Pines, FL. She has minors in Information Technology and French, and is passionate about Data Analytics. She hopes to attend graduate school for Economics.

Artificial Intelligence Hallucinations in Urban Economics: Fabricated Citation Comparative Analysis

Authors: Julia Lobodzinski, Crystal Taylor

Student Major: Economics

Mentor: Crystal Taylor

Mentor's Department: Devoe L. Moore Institute

Mentor's College: COSPP

Co-Presenters: Juanse Gutierrez, Alexis Staveski

Abstract

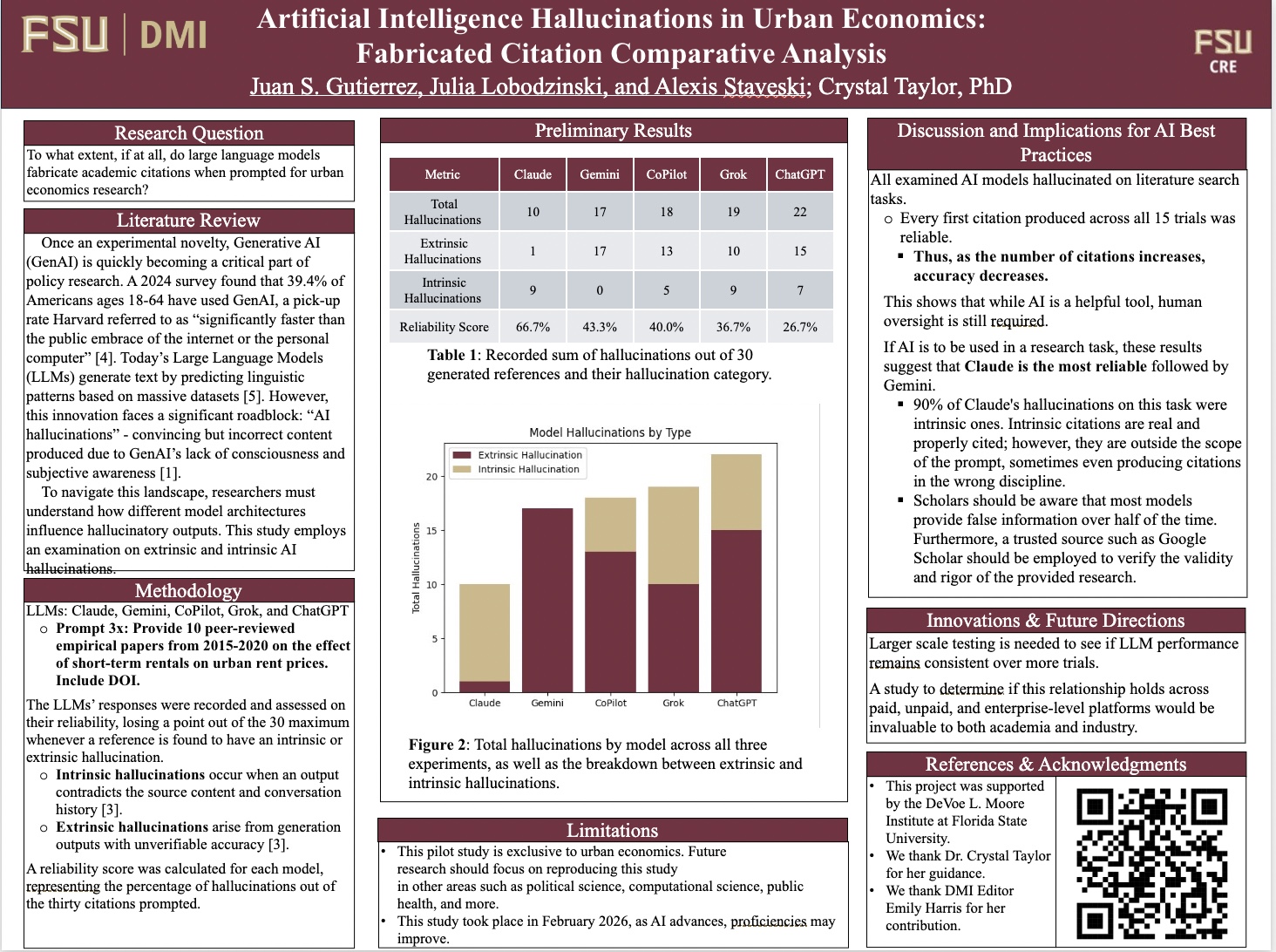

Once an experimental novelty, Generative AI (GenAI) models such as ChatGPT are quickly becoming a critical part of research and writing. A 2024 Centre for Economic Policy Research study found that nearly 40% of Americans aged 18 to 64 report using GenAI. Today’s Large Language Models (LLMs), a subset of GenAI, generate text by predicting linguistic patterns learned from massive datasets (Toner, 2023). However, this innovation faces a significant roadblock: “AI hallucinations,” which are convincing yet incorrect content that arises because GenAI lacks consciousness and subjective awareness (Ozer, 2024).

To understand these hallucinations, this research prompts AI models and examines their output for extrinsic and intrinsic hallucinations. Extrinsic hallucinations are unverifiable or fictional information that an LLM generates without support from the user’s input (Ji et al., 2023). Intrinsic hallucinations are outputs that contradict the source content (Ji et al., 2023). This research asks: “To what extent, if at all, do LLMs fabricate academic citations when prompted for urban economics research?”

This research aims to provide a comparative diagnostic framework for model performance that can predict, categorize, and minimize hallucination inaccuracies. Researchers prompted five AI models with the input: “Provide 10 peer-reviewed empirical papers from 2015-2020 on the effect of short-term rentals on urban rent prices. Include DOI.” Each model’s response is saved and graded for reliability, with checks for invented references, fabricated DOIs, fake titles, and incorrect details. Preliminary results from these trials indicate how reliability differs across models and how each model’s design purpose influences its hallucinations. Future research should test a wider variety of prompts to better guide real-world AI applications.
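The grading step could be automated roughly as follows. This is a minimal sketch and not the study's actual procedure. It assumes each model's response has already been parsed into title, year, and DOI fields. It then checks those fields against a small set of verified records; a real pipeline might instead look each DOI up in a registry such as the Crossref REST API. Several pieces are hypothetical: the mapping from mismatches to the four error types named in the abstract, the helpers `grade` and `hallucination_rate`, and every example record (the `10.5555/example.*` DOIs and "Placeholder" titles are not real papers). The DOI pattern is adapted from one Crossref has published for modern DOIs.

```python
import re
from dataclasses import dataclass

# Pattern adapted from Crossref's published regex for modern DOIs:
# "10." + 4-9 digit registrant code + "/" + suffix.
DOI_PATTERN = re.compile(r"^10\.\d{4,9}/[-._;()/:a-z0-9]+$", re.IGNORECASE)


@dataclass(frozen=True)
class Citation:
    title: str
    year: int
    doi: str


def normalize_title(title: str) -> str:
    """Lowercase and collapse whitespace so trivial formatting differences don't count."""
    return " ".join(title.lower().split())


def normalize_doi(doi: str) -> str:
    """Strip URL/label prefixes that models often attach to DOIs."""
    doi = doi.strip().lower()
    for prefix in ("https://doi.org/", "http://doi.org/",
                   "https://dx.doi.org/", "http://dx.doi.org/", "doi:"):
        doi = doi.removeprefix(prefix)
    return doi.strip()


def grade(citation: Citation, verified: dict[str, Citation]) -> list[str]:
    """Return hypothetical hallucination labels; an empty list means the citation checks out."""
    labels = []
    doi = normalize_doi(citation.doi)
    if not DOI_PATTERN.match(doi):
        labels.append("malformed DOI")
    record = verified.get(doi)
    if record is None:
        # DOI matches no verified paper: either a real title with a wrong DOI,
        # or an entirely invented reference.
        known_titles = {normalize_title(c.title) for c in verified.values()}
        if normalize_title(citation.title) in known_titles:
            labels.append("fabricated DOI")
        else:
            labels.append("invented reference")
        return labels
    if normalize_title(record.title) != normalize_title(citation.title):
        labels.append("fake title")
    if record.year != citation.year:
        labels.append("incorrect details")
    return labels


def hallucination_rate(citations: list[Citation], verified: dict[str, Citation]) -> float:
    """Share of citations that received at least one label."""
    if not citations:
        return 0.0
    return sum(1 for c in citations if grade(c, verified)) / len(citations)


# Hypothetical verified record, keyed by normalized DOI.
verified = {"10.5555/example.001": Citation("Placeholder Paper A", 2018, "10.5555/example.001")}

# Hypothetical model output covering each outcome.
model_output = [
    Citation("Placeholder Paper A", 2018, "https://doi.org/10.5555/example.001"),  # correct
    Citation("Placeholder Paper A", 2019, "10.5555/example.001"),  # wrong year
    Citation("Placeholder Paper A", 2018, "10.5555/example.999"),  # real title, unknown DOI
    Citation("Made-up Paper B", 2017, "doi: 11.bad"),  # invented, malformed DOI
]

for citation in model_output:
    print(grade(citation, verified))
# → []
# → ['incorrect details']
# → ['fabricated DOI']
# → ['malformed DOI', 'invented reference']
print(hallucination_rate(model_output, verified))  # → 0.75
```

Exact matching on normalized titles is deliberately strict. A fuzzy match would tolerate minor wording differences, but it could also let through fabricated titles that closely imitate real ones.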

Keywords: AI, Hallucinations