Research Symposium

26th annual Undergraduate Research Symposium, April 1, 2026

Sanjana Penmetcha Poster Session 1: 9:30 am - 10:30 am / Poster #159

BIO

Sanjana Penmetcha is a sophomore at Florida State University pursuing a Bachelor of Science in Finance and Management Information Systems. She is interested in the intersection of technology and finance and how data-driven tools can be used to better understand financial markets and support more informed investment decision-making. Through the Undergraduate Research Opportunity Program, Sanjana conducts research under the mentorship of Dr. Narendra Bosukonda, examining how audio characteristics in corporate earnings calls may influence investor perception and financial decision-making.

In addition to her research, Sanjana is currently an intern at a wealth management firm, where she supports financial planning work while gaining exposure to the operations of an investment management practice. She also enjoys participating in consulting case competitions through Florida State’s business case club. After completing her undergraduate studies, she hopes to build a career in the finance industry and eventually pursue an MBA.

Creating a Machine Learning Model using an Earnings Call Analysis

Authors: Sanjana Penmetcha, Dr. Narendra Bosukonda

Student Major: Finance & Management Information Systems

Mentor: Dr. Narendra Bosukonda

Mentor's Department: Persis E. Rockwood School of Marketing

Mentor's College: Herbert Wertheim College of Business

Co-Presenters:

Abstract

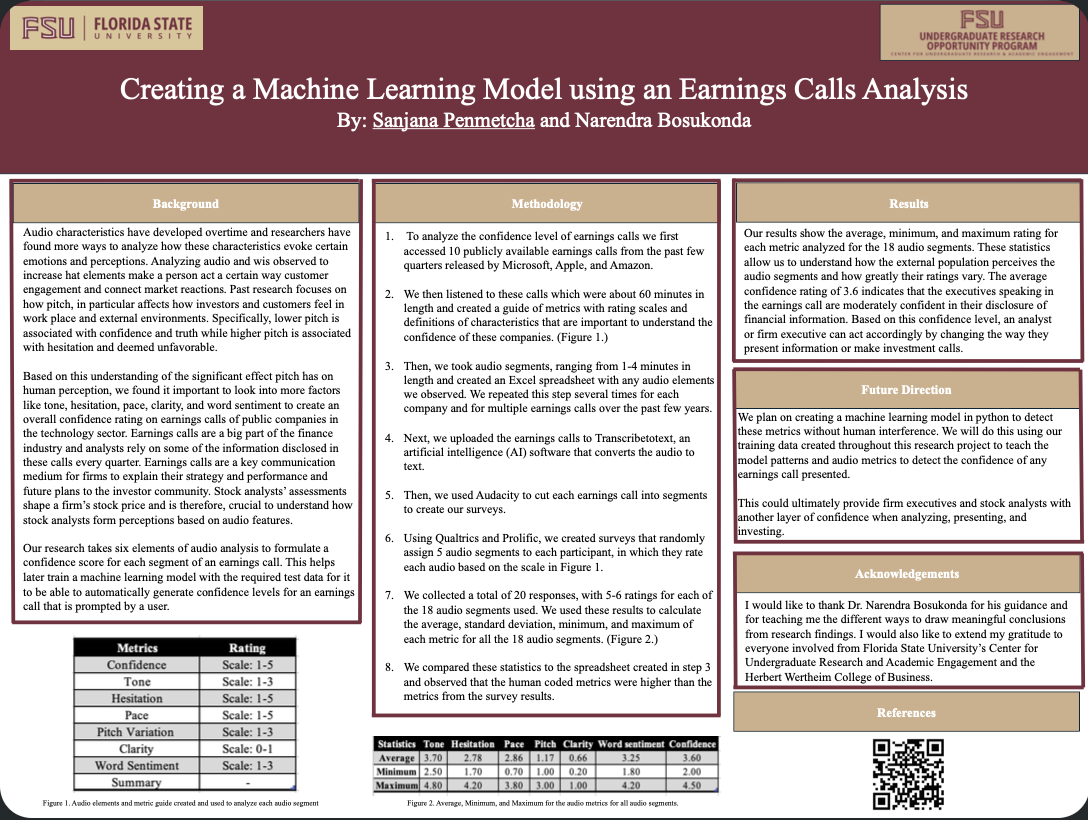

Tone and numerous other audio features can affect human perception and the decision-making process. We chose to investigate this in the context of firm financial reporting. Specifically, we examine how audio features of firm executives' speech during earnings calls affect stock analysts' judgments about firm financial performance. To analyze the voluminous audio data and identify the features that influence analyst judgment, we plan to develop a machine learning model that can measure the different audio features of earnings calls.

As a first step, we built a manually labeled data set, which is needed to train the model to recognize patterns in an earnings call. Each earnings call was divided into audio segments of two to four minutes, and each segment was human-coded for tone, hesitation, pace, pitch variation, clarity, and word sentiment. Next, we validated these labels against ratings from multiple coders, collected through a survey. For each audio segment, we calculated the average, variance, and standard deviation of the survey ratings.
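The per-segment summary step described above can be sketched in a few lines of Python. This is only an illustrative sketch, not the project's actual code: the segment names, the 1-to-5 rating scale, and the ratings themselves are hypothetical, and the use of sample (rather than population) variance is an assumption, since the abstract does not specify which was used.

```python
from statistics import mean, stdev, variance


def summarize_segment(ratings):
    """Return the mean, sample variance, and sample standard deviation
    of the coder ratings for one audio segment."""
    if len(ratings) < 2:
        # Sample variance is undefined for fewer than two ratings.
        raise ValueError("need at least two coder ratings per segment")
    return {
        "mean": mean(ratings),
        "variance": variance(ratings),  # sample variance (assumption)
        "std_dev": stdev(ratings),      # sample standard deviation
    }


# Hypothetical survey ratings of "tone" on an assumed 1-5 scale,
# one list of coder ratings per audio segment.
survey_ratings = {
    "call_A_segment_1": [4, 5, 4, 3],
    "call_A_segment_2": [2, 3, 2, 1],
}

summaries = {
    segment: summarize_segment(ratings)
    for segment, ratings in survey_ratings.items()
}

for segment, stats in summaries.items():
    print(segment, stats)
```

A low variance for a segment suggests the coders largely agreed on its rating, while a high variance flags segments whose labels may need review before they are used to train a model.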

The data analysis shows that executives who maintain a stable pitch and pause less are associated with more favorable investor reactions. In contrast, more frequent pauses, high pitch variation, and negative word sentiment can be associated with uncertainty and weaker reactions. The next steps include loading the audio segments into Python to program the first version of the model.

Overall, the results of our analysis provide the basis for a working machine learning model that assesses the confidence of executives and offers investors in capital markets an additional signaling mechanism.

Keywords: Earnings Call Analysis, Finance, Machine Learning, Investor Sentiment, Financial Disclosure Analysis