Research Symposium

26th annual Undergraduate Research Symposium, April 1, 2026

Malachi Davey Poster Session 2: 10:45 am - 11:45 am / Poster #140

BIO

Malachi Davey is a Computer Science student at Florida State University from Orlando, Florida. His research investigates the security of Graph Neural Networks, focusing on model extraction attacks and defenses that protect sensitive information in machine learning models. His interests include artificial intelligence, security, and data analysis. He plans to pursue a career in developing secure and trustworthy artificial intelligence systems.

Model Extraction Attacks & Defenses

Authors: Malachi Davey, Dr. Yushun Dong

Student Major: Computer Science

Mentor: Dr. Yushun Dong

Mentor's Department: Computer Science

Mentor's College: College of Arts and Sciences

Co-Presenters:

Abstract

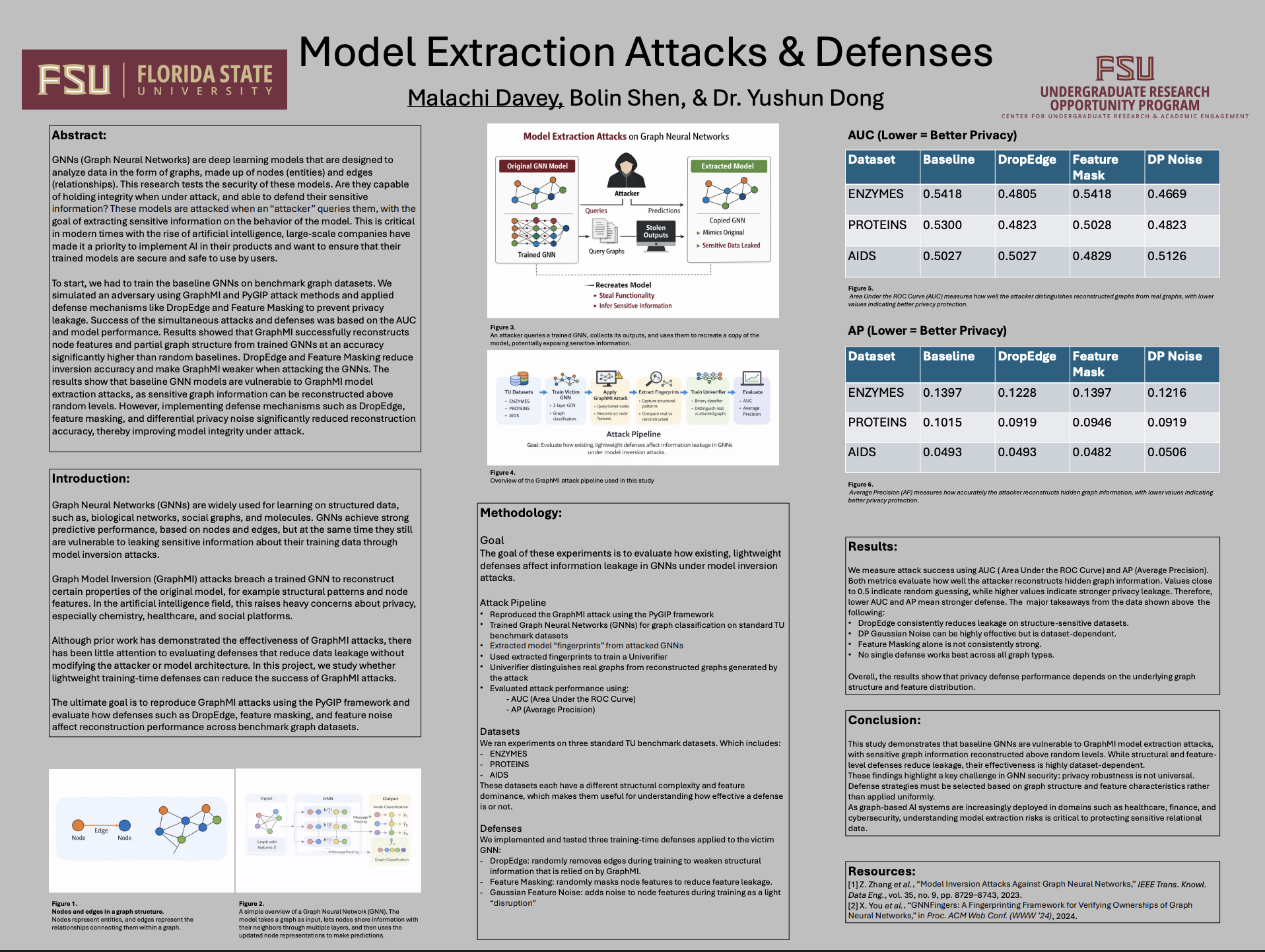

Graph Neural Networks (GNNs) are deep learning models designed to analyze graph-structured data, which is made up of nodes (entities) and edges (relationships). This research tests the security of these models: can they maintain their integrity under attack and protect their sensitive information? In the attacks studied here, an adversary queries a model with the goal of extracting sensitive information about its behavior. This question is increasingly important with the rise of artificial intelligence, as large companies have made it a priority to build AI into their products and want to ensure that their trained models are secure and safe for users. We first trained baseline GNNs on benchmark graph datasets. We then simulated an adversary using GraphMI and PyGIP attack methods and applied defense mechanisms such as DropEdge and Feature Masking to prevent privacy leakage. We measured the success of the attacks and defenses using AUC and model performance. Results showed that GraphMI reconstructs node features and partial graph structure from trained GNNs with accuracy significantly higher than random baselines, so baseline GNN models are vulnerable to GraphMI model extraction attacks. Defense mechanisms such as DropEdge, Feature Masking, and differential privacy noise significantly reduced reconstruction accuracy and weakened GraphMI, thereby improving model integrity under attack.
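As a rough illustration of the two defenses and the metric named in the abstract, the sketch below shows edge dropping, feature masking, and a pairwise AUC in plain Python. It is not the authors' code and does not use the GraphMI or PyGIP APIs. The function names, the drop and mask rates, and the toy graph and attack scores are all hypothetical, and the choice to mask whole feature columns is an assumption about how Feature Masking might be applied.

```python
import random


def drop_edge(edges, drop_rate, rng):
    """Keep each edge independently with probability 1 - drop_rate."""
    return [edge for edge in edges if rng.random() >= drop_rate]


def mask_features(features, mask_rate, rng):
    """Zero out each feature column with probability mask_rate.

    Assumption: the same columns are masked for every node.
    """
    num_features = len(features[0])
    keep = [rng.random() >= mask_rate for _ in range(num_features)]
    return [[x if k else 0.0 for x, k in zip(row, keep)] for row in features]


def auc(scores_pos, scores_neg):
    """Probability that a true edge outscores a non-edge (ties count half)."""
    wins = 0.0
    for p in scores_pos:
        for n in scores_neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(scores_pos) * len(scores_neg))


# Toy graph: 4 nodes, 5 undirected edges, 4 features per node (made-up values).
rng = random.Random(0)
edges = [(0, 1), (1, 2), (2, 3), (3, 0), (0, 2)]
features = [[1.0, 2.0, 3.0, 4.0] for _ in range(4)]

sparse_edges = drop_edge(edges, drop_rate=0.4, rng=rng)
masked_features = mask_features(features, mask_rate=0.5, rng=rng)

# Hypothetical attack scores for true edges and for non-edges.
attack_auc = auc([0.9, 0.8, 0.4], [0.7, 0.3, 0.2])
print(sparse_edges, masked_features[0], round(attack_auc, 3))
```

An AUC near 0.5 would mean the attacker's edge predictions are no better than random guessing, so a defense is doing its job when it pushes the attack's AUC toward 0.5 without costing the model too much of its own accuracy.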

Keywords: Machine Learning, Computer Science, AI Privacy