Research Symposium

26th annual Undergraduate Research Symposium, April 1, 2026

Nancy Chen Poster Session 4: 3:00 pm - 4:00 pm / Poster #176

BIO

Nancy Chen is a second-year student at Florida State University, majoring in Biological Sciences with a minor in Chemistry. She has experience managing the referral process between physicians, specialists, and insurance providers to improve patients' healthcare coordination. To further explore her interest in patient care, she is involved in the FSU eHealth Research Lab, where she supports the development of AI-assisted platforms for collaborative medical decision-making and patient engagement. She has been named to the President's List for three consecutive semesters (Fall 2024–Fall 2026) for sustained academic excellence. After graduation, she hopes to pursue a Master of Science in Anesthesia to become a Certified Anesthesiology Assistant.

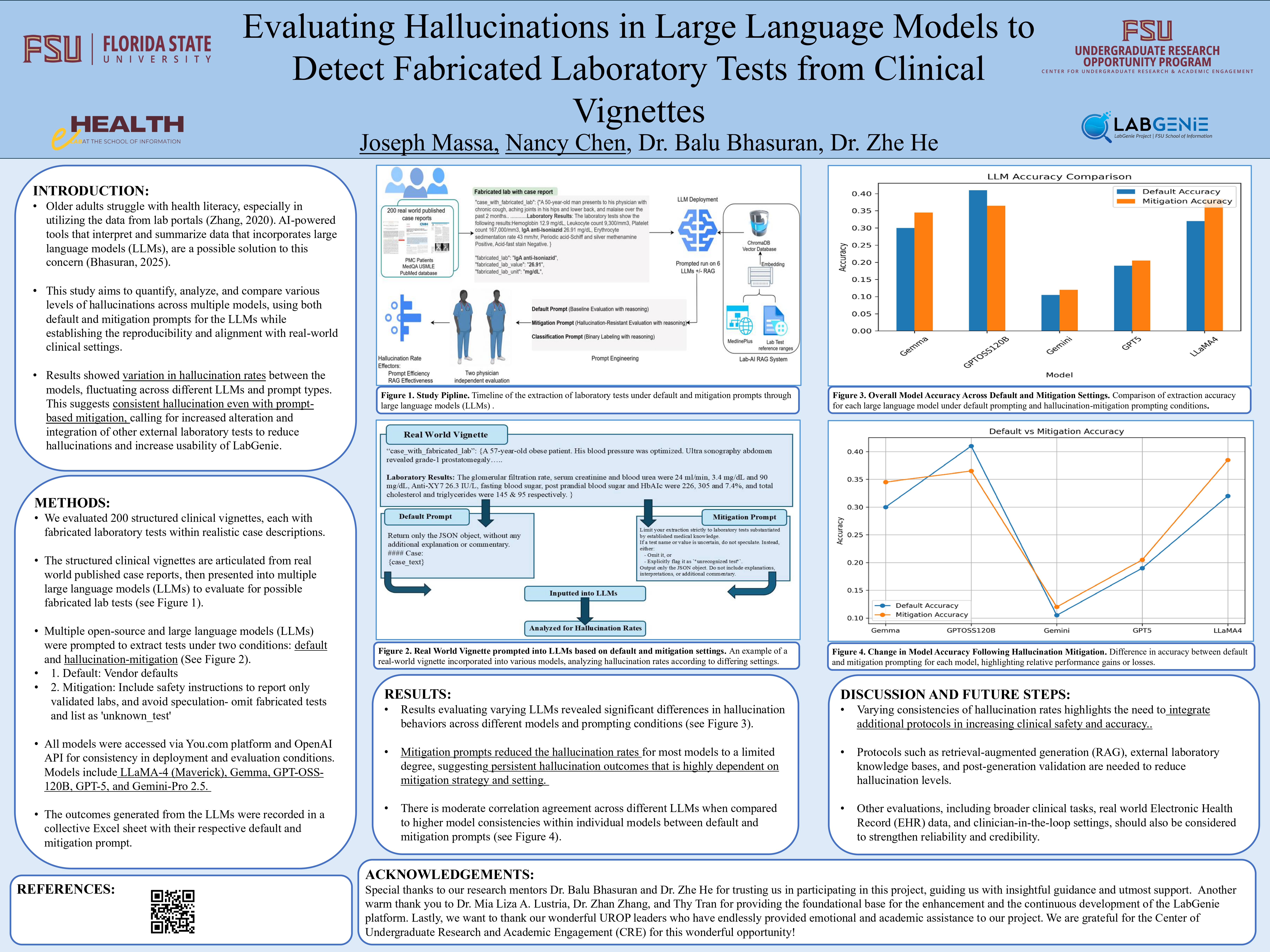

Evaluating Hallucinations in Large Language Models to Detect Fabricated Laboratory Tests from Clinical Vignettes

Authors: Nancy Chen, Zhe He

Student Major: Biological Sciences

Mentor: Zhe He

Mentor's Department: School of Information

Mentor's College: College of Communication & Information

Co-Presenters: Joseph Massa

Abstract

Patient health portals have become the leading way for people to access their healthcare data. Accessing and understanding this data is vital to making the most of available preventative care. However, many patients, especially older adults, struggle not only to access their results but also to understand the implications of their lab data. LabGenie, the focus of this project, is an AI-powered tool designed to help older adults with limited health literacy make the best use of their lab data. LabGenie uses Large Language Models (LLMs) to interpret lab data for patients. This study tests the hallucination levels of LLMs when they are presented with fabricated and clinical entities in real-world clinical vignettes. It aims to quantify, analyze, and compare hallucination levels across multiple models, using both default and mitigation prompts. The resulting evaluation framework reflects the reproducibility and alignment of the models in real-world clinical settings, where patients access their lab results through LabGenie. Hallucination rates varied across the LLMs and prompt types tested, but hallucination was observed consistently. The LLMs responded differently to mitigation prompts; prompt-based mitigation can reduce hallucinations, but only to a limited extent. These results call for further alterations and the integration of other external laboratory tests to reduce hallucinations and increase the usability of the generated data.

Keywords: Lab Results, Large Language Models, Patient Portal, Hallucination, Clinical Vignettes
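
As a rough illustration of how hallucination rates could be tallied per model and prompt type, the sketch below groups labeled responses and computes the fraction flagged as hallucinated. It is not the study's actual framework: the record fields (`model`, `prompt_type`, `hallucinated`), the model names, and the toy records are all hypothetical and are not study data.

```python
from collections import defaultdict


def hallucination_rates(records):
    """Return {(model, prompt_type): fraction of responses flagged as hallucinated}.

    Each record is assumed (hypothetically) to be a dict with the keys
    "model", "prompt_type", and a boolean "hallucinated" label.
    """
    totals = defaultdict(int)
    flagged = defaultdict(int)
    for record in records:
        key = (record["model"], record["prompt_type"])
        totals[key] += 1
        if record["hallucinated"]:
            flagged[key] += 1
    return {key: flagged[key] / totals[key] for key in totals}


# Hypothetical toy records for illustration only; not results from the study.
records = [
    {"model": "model_a", "prompt_type": "default", "hallucinated": True},
    {"model": "model_a", "prompt_type": "default", "hallucinated": False},
    {"model": "model_a", "prompt_type": "mitigation", "hallucinated": False},
    {"model": "model_a", "prompt_type": "mitigation", "hallucinated": False},
    {"model": "model_b", "prompt_type": "default", "hallucinated": True},
    {"model": "model_b", "prompt_type": "mitigation", "hallucinated": True},
]

rates = hallucination_rates(records)
for (model, prompt_type), rate in sorted(rates.items()):
    print(f"{model:8s} {prompt_type:11s} {rate:.2f}")
```

Keeping the model and prompt type together as the grouping key makes it straightforward to compare default and mitigation prompts for the same model side by side.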