Research Symposium

26th annual Undergraduate Research Symposium, April 1, 2026

Yug Patel

Poster Session 1: 9:30 am - 10:30 am / Poster #183

BIO

Yug Patel, from Tallahassee, is a first-year honors student at Florida State University majoring in psychology. Yug plans to attend medical school while also exploring research opportunities that build on Yug's undergraduate experiences. Currently, Yug is working on a project comparing the computational performance of Python and Julia on Graphics Processing Units (GPUs) under Professor Raghav Gn. at the FAMU-FSU College of Engineering. Outside of academics, Yug likes to play chess and spend time outdoors.

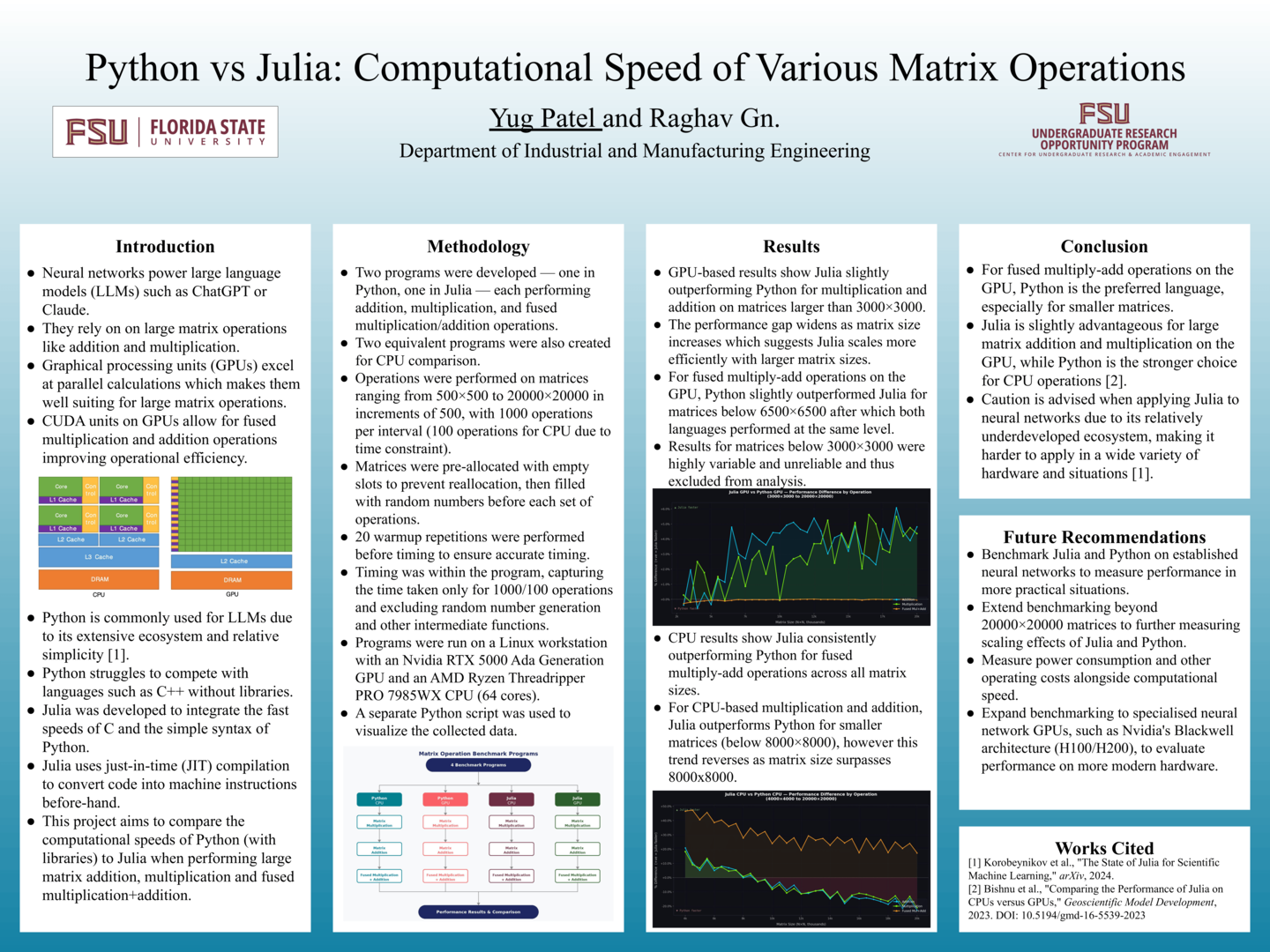

Python vs Julia: Computational Speed of Various Matrix Operations

Authors: Yug Patel, Raghav Gn. (Gnanasambandam)

Student Major: Psychology

Mentor: Raghav Gn. (Gnanasambandam)

Mentor's Department: Industrial and Manufacturing Engineering

Mentor's College: FAMU-FSU College of Engineering

Co-Presenters: N/A

Abstract

Julia is a programming language designed to combine Python's simplicity with the computational speed of C and similar languages. With the rise of neural networks in machine learning, which rely heavily on large matrix operations, this project compares Julia's performance with Python's on CPU- and GPU-based matrix operations: addition, multiplication, and fused multiply-add. GPUs were chosen for their ability to parallelize many simple operations across thousands of cores, including CUDA cores that can perform a multiplication and an addition in a single clock cycle (fused multiply-add).
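Fused multiply-add evaluates a×b + c as one operation with a single rounding step, whereas a separate multiply and add rounds the product first. The minimal pure-Python sketch below illustrates only that numerical property; it is not how the benchmark was run. The helper names `fma_emulated` and `unfused` are hypothetical, and exact `fractions.Fraction` arithmetic is used here as a stand-in for the hardware instruction.

```python
from fractions import Fraction


def fma_emulated(a: float, b: float, c: float) -> float:
    """Hypothetical helper: compute a*b + c exactly, then round once to a float.

    Fraction arithmetic is exact, so the only rounding happens in the final
    float() conversion, mimicking the single rounding of a hardware FMA.
    """
    return float(Fraction(a) * Fraction(b) + Fraction(c))


def unfused(a: float, b: float, c: float) -> float:
    """Hypothetical helper: separate multiply and add (product rounded first)."""
    return a * b + c


if __name__ == "__main__":
    # 0.1 is not exactly representable; its exact product with 10 is 1 + 2**-54.
    print(unfused(0.1, 10.0, -1.0))       # 0.0 (the 2**-54 was rounded away)
    print(fma_emulated(0.1, 10.0, -1.0))  # 5.551115123125783e-17 (== 2**-54)
```

On Python 3.13 or later, `math.fma` performs the same single-rounding computation directly.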

Each operation was repeated 1,000 times at each size, on square matrices ranging from 500×500 to 20,000×20,000 in increments of 500 and filled with randomly generated values between 0 and 1, on an Nvidia RTX 5000 Ada Generation GPU and an AMD Ryzen Threadripper PRO 7985WX CPU. On the GPU, Julia outperformed Python by 1–5% for addition and 2–6% for multiplication for matrices larger than 2,500×2,500, while for fused multiply-add the two languages performed essentially the same. Smaller matrices were excluded because their timings showed high variance and were unreliable. On the CPU, Python performed better for addition and multiplication above 8,000×8,000 but lagged behind Julia in fused multiply-add.
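The timing procedure described above can be sketched with only the Python standard library. This is a hypothetical harness, not the authors' code. The median as the summary statistic, the `percent_faster` definition of the percentage difference, and the pure-Python `add` standing in for a GPU kernel are all assumptions made for illustration.

```python
import random
import statistics
import time
from typing import Callable

Matrix = list[list[float]]

# Square sizes from 500x500 to 20,000x20,000 in steps of 500, as in the abstract.
SIZES = list(range(500, 20_001, 500))


def random_matrix(n: int, rng: random.Random) -> Matrix:
    """n x n matrix of uniform random values in [0, 1)."""
    return [[rng.random() for _ in range(n)] for _ in range(n)]


def add(a: Matrix, b: Matrix) -> Matrix:
    """Element-wise addition; a slow pure-Python stand-in for a GPU kernel."""
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]


def time_operation(op: Callable[[], object], repetitions: int = 1000) -> float:
    """Run op `repetitions` times and return the median wall-clock time (s).

    Assumption: the abstract does not say which statistic was reported.
    """
    samples = []
    for _ in range(repetitions):
        start = time.perf_counter()
        op()
        samples.append(time.perf_counter() - start)
    return statistics.median(samples)


def percent_faster(t_python: float, t_julia: float) -> float:
    """Hypothetical metric: Julia's speedup as a percentage of Python's time.

    Positive means Julia was faster; negative means Python was faster.
    """
    return (t_python - t_julia) / t_python * 100.0


if __name__ == "__main__":
    rng = random.Random(0)
    n = 200  # tiny size so the pure-Python demo finishes quickly
    a, b = random_matrix(n, rng), random_matrix(n, rng)
    t = time_operation(lambda: add(a, b), repetitions=10)
    print(f"median add time for {n}x{n}: {t:.6f} s")
```

Timing a real GPU run would also require synchronizing the device inside each timed call (for example, `torch.cuda.synchronize()` in PyTorch), because kernel launches return before the GPU finishes its work.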

These findings suggest that Julia could offer performance gains for large matrix operations, though further research is needed to determine whether these advantages persist in full neural network implementations, where Python's mature ecosystem of CUDA-accelerated libraries such as PyTorch could provide additional benefits and improved reliability. Testing on newer GPUs, such as Nvidia's H200, would also be worthwhile.

Keywords: Python, Neural, GPU, Julia, Programming