Research Symposium

26th annual Undergraduate Research Symposium, April 1, 2026

Autumn Thomas

Poster Session 3: 1:45 pm - 2:45 pm / Poster #291

BIO

Autumn is a second-year student at Florida State University. She is currently pursuing a Bachelor of Science in Psychology, with minors in Communications and Business. She works under Dr. Michael Kaschak and Nelu Radpour, M.S., in their Cognitive Psychology lab. She aims to earn a graduate degree in either Clinical Psychology or Sport Psychology on her path to becoming a licensed Sport Psychologist.

What's That Sound: How Humans vs. AI Hear Shapes in Words

Authors: Autumn Thomas, Nelu Radpour

Student Major: Psychology

Mentor: Nelu Radpour

Mentor's Department: Department of Psychology

Mentor's College: College of Arts and Sciences

Co-Presenters:

Abstract

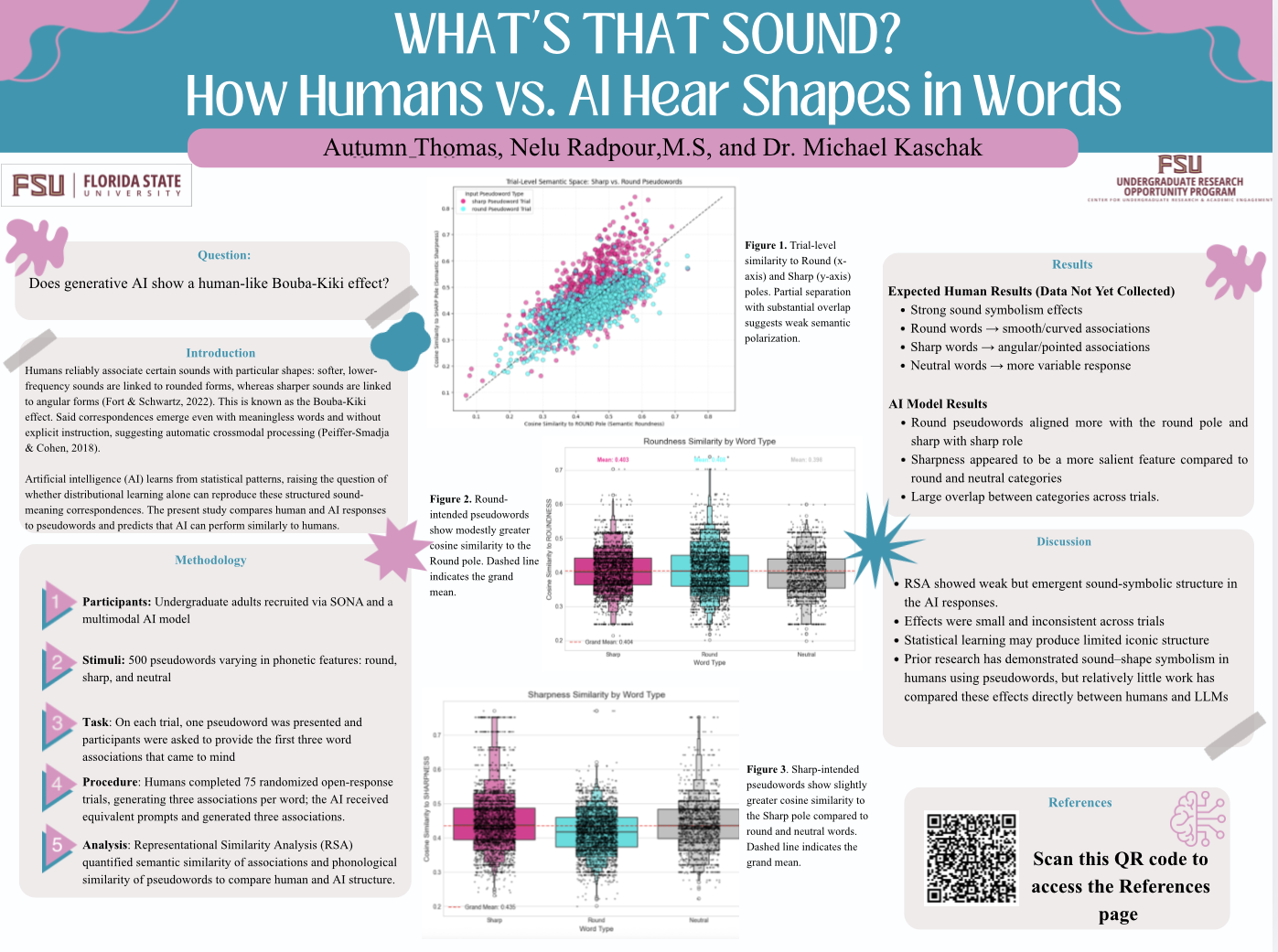

Sound-shape symbolism reveals systematic mappings between phonetic structure and semantic meaning, raising questions about whether such correspondences arise from innate perceptual mechanisms or statistical learning. The present study compared associations generated by human participants and artificial intelligence (AI) to examine whether large language models (LLMs) exhibit human-like sound-meaning structure. Humans appear to have an innate “gut feeling” for sound symbolism, automatically matching sounds to shapes based on perceptual and articulatory experience. In contrast, AI does not “feel” the shape of a sound. Although advanced models can guess correct matches through large-scale statistical learning, they lack the embodied sensory systems that make these correspondences natural for humans.

In the present study, undergraduate participants generated three associations to pseudowords (made-up words with no real meaning) that varied in round, sharp, or neutral phonetic properties. LLMs completed the same task. Representational Similarity Analysis (RSA) quantified phonological and semantic similarity within and between human and AI-generated responses.
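For readers unfamiliar with RSA, the general idea can be sketched as follows: compute pairwise dissimilarities among the pseudowords in a phonological space and again in a semantic space, then rank-correlate the two sets of dissimilarities. This is only an illustrative sketch, not the study's actual pipeline. The function names, the use of spelling-based edit distance as a stand-in for phonological distance, and the toy two-dimensional "semantic" vectors are all assumptions made for the example and are not data from the study.

```python
from itertools import combinations
from math import sqrt


def edit_distance(a, b):
    """Levenshtein distance over spelling (assumed stand-in for phonological distance)."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1, curr[j - 1] + 1, prev[j - 1] + cost))
        prev = curr
    return prev[-1]


def cosine_distance(u, v):
    """1 minus cosine similarity between two vectors."""
    dot = sum(x * y for x, y in zip(u, v))
    norm_u = sqrt(sum(x * x for x in u))
    norm_v = sqrt(sum(y * y for y in v))
    return 1.0 - dot / (norm_u * norm_v)


def pairwise(items, dist):
    """Upper triangle of the dissimilarity matrix, flattened to a list."""
    return [dist(a, b) for a, b in combinations(items, 2)]


def ranks(values):
    """1-based ranks; tied values share their mean rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = mean_rank
        i = j + 1
    return result


def pearson(x, y):
    """Pearson correlation between two equal-length lists."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def rsa_score(words, vectors):
    """Spearman correlation (Pearson on ranks) between the two dissimilarity structures."""
    phon = pairwise(words, edit_distance)
    sem = pairwise(vectors, cosine_distance)
    return pearson(ranks(phon), ranks(sem))


# Toy, made-up inputs: four classic pseudowords and hypothetical semantic vectors.
words = ["bouba", "maluma", "kiki", "takete"]
vectors = [[1.0, 0.1], [0.9, 0.2], [0.1, 1.0], [0.2, 0.9]]
score = rsa_score(words, vectors)
print(f"phonology-semantics alignment: {score:.2f}")
```

A score near 1 would mean that pseudowords that sound alike also draw similar associations, while a score near 0 would indicate little alignment. In practice, the semantic vectors would come from embeddings of the generated associations, and phonological distance would more plausibly be computed over phonetic features than over spelling.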

Humans are expected to show strong within-category semantic clustering and robust phonology–semantics alignment, reflecting automatic cross-modal correspondences. In contrast, AI showed small but statistically significant differences in semantic alignment across sharp, round, and neutral-sounding pseudowords (p < .01). However, effect sizes were modest and reliability across trials was low, indicating weak and non-polarized sound-symbolic structure within the model.

These findings contribute to debates on embodied cognition, sound-symbolic meaning, and the limits of statistical language models.

Keywords: cognition, AI, Bouba-Kiki, pseudoword, LLM, sound-shape symbolism