Research Symposium

26th annual Undergraduate Research Symposium, April 1, 2026

Joseph Massa

Poster Session 4: 3:00 pm - 4:00 pm / Poster #176

BIO

Joseph is a Public Health major from Pembroke Pines, Florida. He is interested in public health as it applies to community health and health administration. His research interests lie in optimizing patient services and improving patient care.

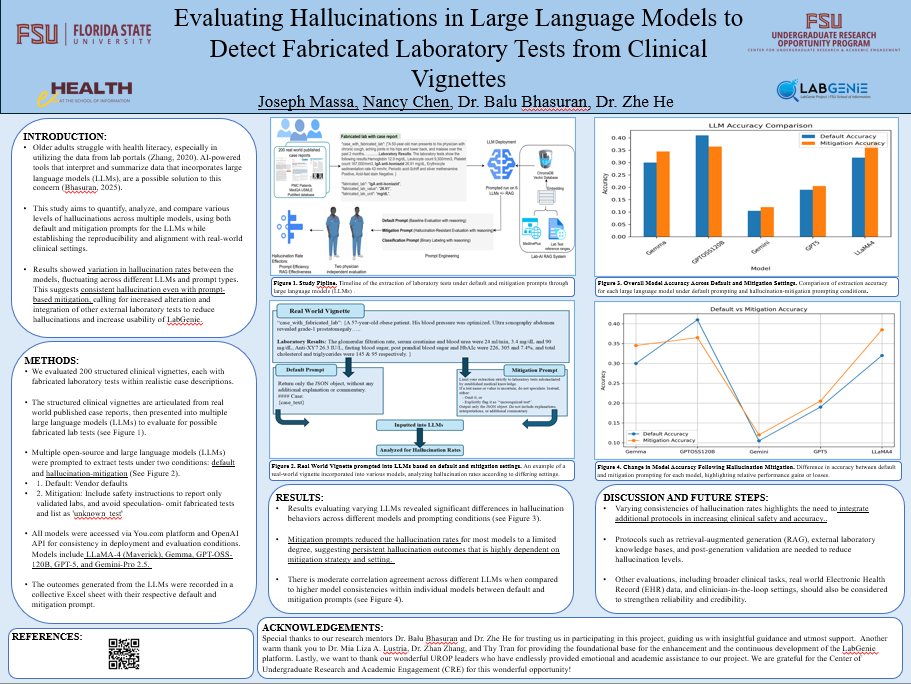

Evaluating Hallucinations in Large Language Models to Detect Fabricated Laboratory Tests from Clinical Vignettes

Authors: Joseph Massa, Dr. Zhe He

Student Major: Public Health

Mentor: Dr. Zhe He

Mentor's Department: College of Communication and Information

Mentor's College: Beijing University

Co-Presenters: Nancy Chen

Abstract

Patient health portals have become the leading way people access their healthcare data. Accessing and understanding this data is vital to making the most of available preventive care. However, many patients, especially older adults, struggle not only to access their results but also to understand what their lab data mean. LabGenie, the focus of this project, is an AI-powered tool designed to help older adults with limited health literacy make the best use of their lab data. LabGenie uses Large Language Models (LLMs) to interpret lab data for patients. This study tests how often LLMs hallucinate when real-world clinical vignettes contain fabricated laboratory tests alongside genuine clinical entities. It quantifies, analyzes, and compares hallucination rates across multiple models, using both default and mitigation prompts. The resulting evaluation framework assesses the reproducibility and alignment of the models in real-world clinical settings, where patients access their lab results through LabGenie. Hallucination rates varied across the LLMs and prompt types tested, but hallucination was observed consistently. Different LLMs responded differently to mitigation prompts: prompt-based mitigation can reduce hallucinations, but only to a limited extent. These results call for further refinement, including integration of external laboratory test resources, to reduce hallucinations and increase the usability of the generated output.

Keywords: AI, Health Data, Bioinformatics, Public Health
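The abstract describes comparing hallucination rates across models under default and mitigation prompts. As a rough illustration only, and not the study's actual pipeline, the sketch below assumes each model response has already been labeled as hallucinated or not (for example, whether it treated a fabricated lab test as real), then computes the rate per model and prompt type and the drop achieved by the mitigation prompt. The `Trial` record, the `hallucination_rates` and `mitigation_effect` helpers, the model name `model-a`, and the toy trials are all hypothetical placeholders, not data or results from this study.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Trial:
    """One labeled model response to one vignette (hypothetical record format)."""
    model: str          # placeholder model name
    prompt_type: str    # assumed to be "default" or "mitigation"
    hallucinated: bool  # assumed label: True if the response treated a fabricated test as real


def hallucination_rates(trials):
    """Return {(model, prompt_type): fraction of responses labeled as hallucinated}."""
    counts = defaultdict(lambda: [0, 0])  # key -> [hallucinated count, total count]
    for t in trials:
        c = counts[(t.model, t.prompt_type)]
        c[0] += int(t.hallucinated)
        c[1] += 1
    return {key: h / n for key, (h, n) in counts.items()}


def mitigation_effect(rates):
    """Absolute drop in hallucination rate from default to mitigation prompt, per model."""
    models = {m for m, _ in rates}
    return {
        m: rates[(m, "default")] - rates[(m, "mitigation")]
        for m in models
        if (m, "default") in rates and (m, "mitigation") in rates
    }


# Invented toy labels for illustration only; these are NOT study results.
trials = [
    Trial("model-a", "default", True),
    Trial("model-a", "default", True),
    Trial("model-a", "default", False),
    Trial("model-a", "default", True),
    Trial("model-a", "mitigation", True),
    Trial("model-a", "mitigation", False),
    Trial("model-a", "mitigation", False),
    Trial("model-a", "mitigation", False),
]
rates = hallucination_rates(trials)
print(rates)                     # {('model-a', 'default'): 0.75, ('model-a', 'mitigation'): 0.25}
print(mitigation_effect(rates))  # {'model-a': 0.5}
```

Reporting the mitigation effect as an absolute difference keeps the comparison simple; a real evaluation would likely also report per-model sample sizes or confidence intervals, since rates from small vignette sets can fluctuate.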